AI

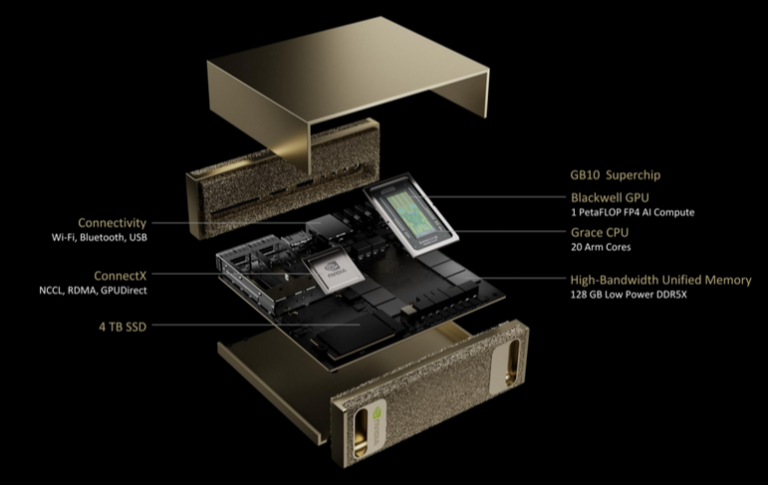

Nvidia Digits $3000 AI Supercomputer

With VRAM being king in the AI world, people started buying Apple computers because their RAM is unified, meaning it is shared by both the CPU and the GPU. How else would you get 128 GB of memory so “cheaply,” with a good portion of it usable by the GPU? This seemed like a more cost-effective way of getting a large pool of VRAM. As a bonus, you got it in a smaller box with lower power draw and fewer technical hurdles than, say, building a four-GPU rig.

Nvidia surprised us by announcing an ARM-based computer with 128 GB of unified memory for $3,000, about $1,700 less than you would pay Apple.

This computer is said to handle models of up to 200 billion parameters, which is simply great.
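For a rough sanity check on that figure, here is our own back-of-the-envelope estimate. It is not Nvidia's published math. A model's weights take up roughly its parameter count multiplied by the bytes stored per parameter. The sketch assumes the large models are run with quantized (reduced-precision) weights, and the `weight_memory_gb` helper is purely illustrative. It ignores the extra memory needed for context (KV cache), activations, and the operating system, so treat the numbers as a lower bound.

```python
# Back-of-the-envelope estimate of how much memory a model's weights need.
# Assumption: weight memory = parameter count x bits per weight / 8.
# Ignores KV cache, activations, and OS overhead, so real usage is higher.

def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Return approximate weight memory in decimal gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9

UNIFIED_MEMORY_GB = 128  # the 128 GB unified memory pool mentioned above

if __name__ == "__main__":
    # Compare common weight precisions for a 200-billion-parameter model.
    for bits in (16, 8, 4):
        need = weight_memory_gb(200, bits)
        verdict = "fits" if need <= UNIFIED_MEMORY_GB else "does not fit"
        print(f"200B params at {bits}-bit: ~{need:.0f} GB of weights "
              f"({verdict} in {UNIFIED_MEMORY_GB} GB)")
```

Only the 4-bit case, at about 100 GB of weights, fits within 128 GB. At 16-bit precision, a 200-billion-parameter model would need more than three times the available memory. This suggests the 200B figure depends on heavily quantized models.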

We hope this product won’t be in such high demand that it’s hard to get hold of.

Discuss on the Gear Rumors Forum

Stable Diffusion 3

The makers of Stable Diffusion, the open-source image generator you can run locally, have announced its next version, which is not yet available to download. Current versions of Stable Diffusion struggle to render coherent text, but the new version appears to improve this significantly. It also brings some other incremental improvements to image generation, especially for images with multiple subjects.

Comment on the Gear Rumors Forums

Rabbit R1

The $200 Rabbit R1 is getting all of the hype, but is it just hype? Reactions to the device have been mixed, with some people calling it useless and others calling it the way of the future. Rabbit’s keynote certainly wasn’t reassuring.

Rabbit makes it clear that the R1 is not a phone replacement. It’s a pocketable AI assistant. Gee, that kind of sounds like what phones can do though, doesn’t it?

Amid all of this talk about large language models (LLMs), Rabbit introduces a new term: large action model. It’s supposed to do things for you: make reservations, book a flight, make recommendations, get a recipe. It’s trying to go one step further than current LLMs by acting on the information provided.

Ultimately, we do have to praise Rabbit. They made a relatively affordable device that will, at the very least, push phone makers to give us more AI functionality in our phones.

Visit Rabbit’s website for more information.